Hacking for Defense - JIDA Design Sprint

About H4D

Hacking for Defense (H4D) is a collaboration between the Department of Defense’s (DoD) Defense Innovation Unit Experimental (DIUx), Stanford, and BMNT. It was conceived as a way for the DoD to address pressing military problems by harnessing lean methodologies developed in Silicon Valley.

Challenge

Our challenge was to improve how data is presented to Joint Improvised-Threat Defeat Agency (JIDA) analysts in a way that facilitates discovery.

My Role

I was embedded on one of three teams working on the project and was tasked with bringing my design expertise and an outside perspective to the problem.

Summary

JIDA challenged us to find a better way to visualize data, one that facilitates discovery. By framing the problem within a week-long design sprint, we delivered a prototype that aims to:

- Decrease time spent gathering data

- Make aggregation of data into a final report easier

- Increase time available for analysis of data

Check out the prototype or see it in action below.

Design Process

To work through this challenge, we used a modified version of the Google Ventures design sprint.

The following case study details each stage of this process and how we went from problem to prototype in five days.

Day 1 - Problem

Through questioning the analysts, we learned that most of an analyst’s time is spent finding and gathering data from multiple databases, each with its own proprietary system and interface. Moving data between these systems is difficult, and analysts are hesitant to use tools and databases they aren’t familiar with because the interfaces are so disparate.

By the end of the week our problem statement read:

“Relevant improvised threat information is not intuitively communicated in real-time to meet JIDA analyst needs.”

We referenced this problem statement constantly for the rest of the week to make sure we were addressing our end users, JIDA analysts, and the problem they face.

Creating our problem statement and mission model canvas.

Based on our problem statement, we began creating a mission model canvas. A mission model canvas is like a lean business canvas, but modified to meet the needs of mission-driven organizations, where the goal of a design isn’t to earn money but to fulfill a mission.

Day 2 - Diverge

We spent day two exploring possible solutions to the problem statement through rounds of sketching and Crazy 8s.

Day 3 - Converge

Before narrowing our solutions down to the single idea our prototype would be based on, we created a critical hypothesis that the prototype would prove or disprove:

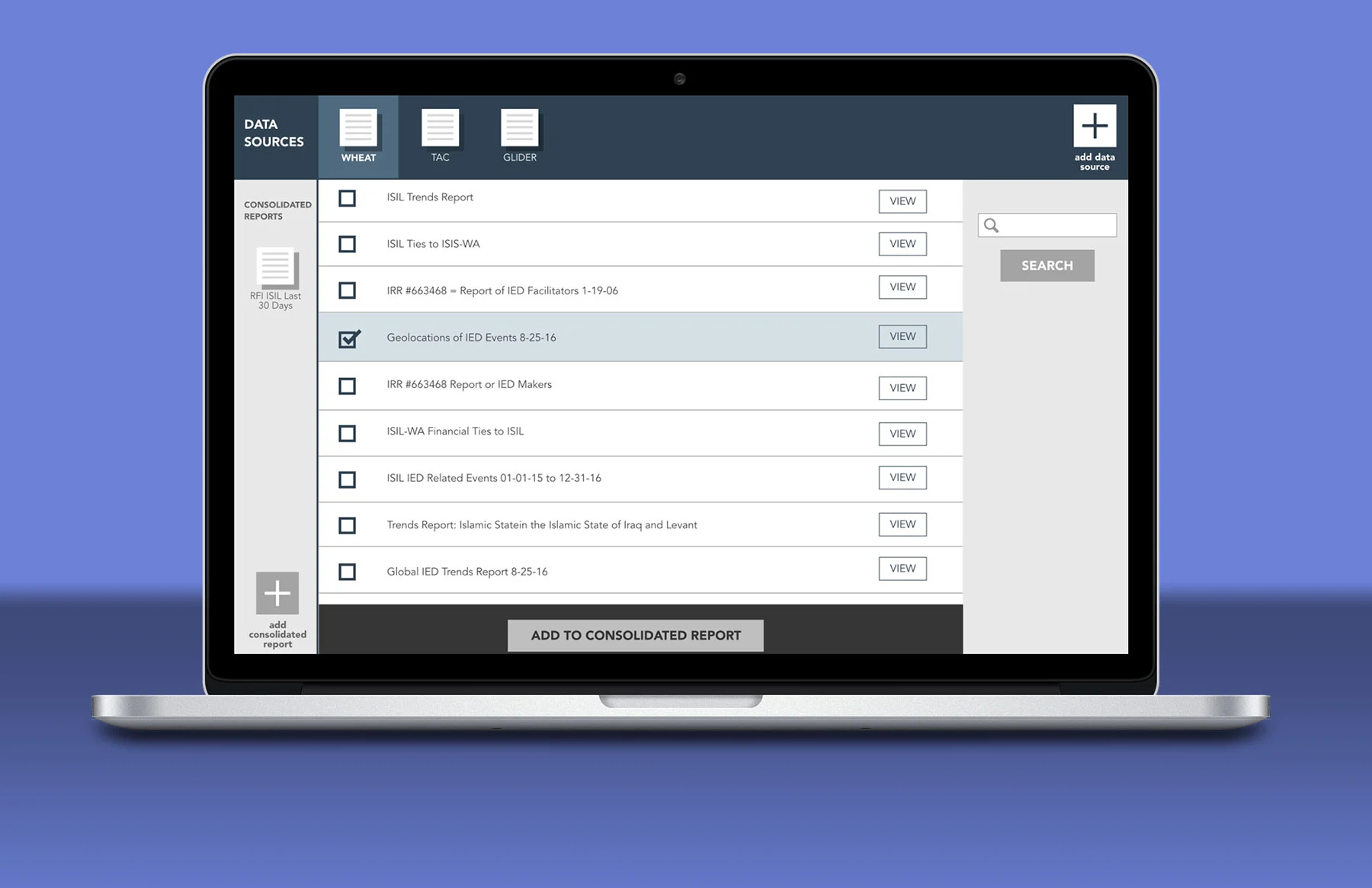

“If an analyst can manipulate several data sources in a dashboard, then analysis will be easier because there will be less siloing of data and increased interface standardization.”

With our critical hypothesis in place we began planning for our prototype.

Day 4 - Build

I started prototype building by questioning the analysts to check my assumptions about their workflow.

Once I understood their workflow I worked with them to develop some rough sketches of what the dashboard might look like and how it might function.

Prototype sketches

I then put together a list of tasks we would ask participants to complete so we could compare the efficiency of our design with that of their current system:

- Add GLIDER database to the dashboard.

- Pull the geolocations of IED events on August 25, 2016 from WHEAT, and add it to the consolidated report.

- View the source data for the image that you just added to the consolidated report, IED events on August 25, 2016, from the WHEAT database.

- Edit the source map.

Finally, I built out the prototype. Check out the complete prototype here.

Day 5 - Pitch

We presented our problem statement, mission model canvas, critical hypothesis, and prototype to high-ranking members of DIUx. They were excited about the prototype and saw that it could be useful beyond JIDA, across the entire intelligence community.

Usability testing is commencing soon. This case study will be updated once I have results.